gRPC 微服务通信实战教程

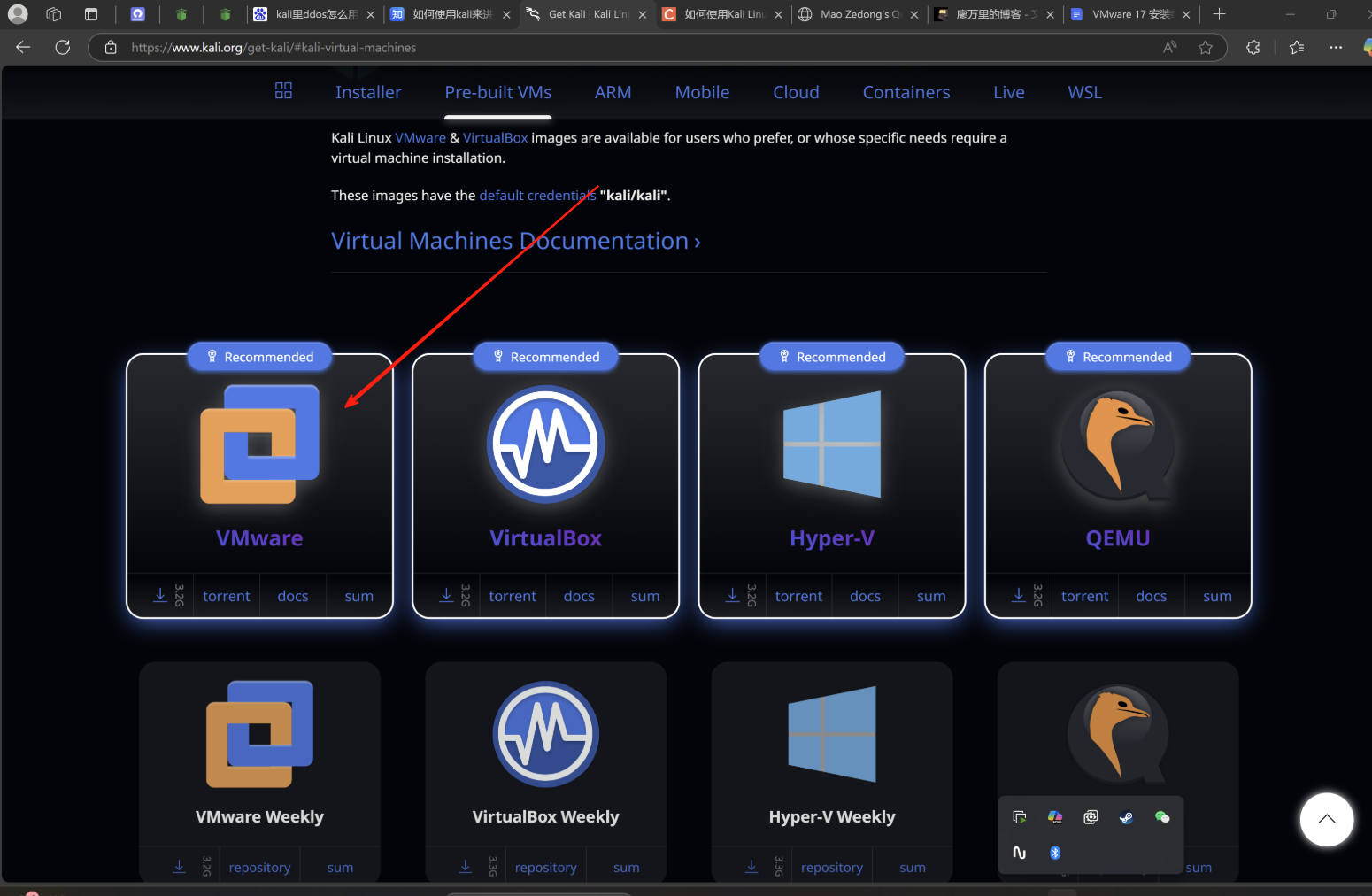

gRPC 是 Google 开源的高性能 RPC 框架,基于 HTTP/2 和 Protocol Buffers,支持四种通信模式,是微服务架构中服务间通信的利器。本文从基础概念到实战应用,全面解析 gRPC 的核心原理与最佳实践。## 一、gRPC 基础概念 gRPC(Remote Procedure Call)是一个高性能、开源的通用 RPC 框架,由 Google 于 2015 年开源。它基于 HTTP/2 协议传输,使用 Protocol Buffers 作为接口定义语言和数据序列化格式。 ### 1.1 核心特性 gRPC 具有以下核心优势: - **高性能**:基于 HTTP/2 多路复用,支持双向流 - **强类型**:通过 Proto 文件定义接口,编译时检查 - **多语言支持**:支持 C++、Java、Python、Go 等 10+ 语言 - **代码生成**:自动生成客户端和服务端代码 ### 1.2 工作原理 gRPC 的工作流程可以概括为: 1. 定义 Proto 文件,描述服务接口和消息结构 2. 编译 Proto 文件,生成服务端和客户端代码 3. 服务端实现接口并启动服务 4. 客户端通过 Stub 调用远程方法 ## 二、Protocol Buffers 详解 Protocol Buffers(简称 ProtoBuf)是 Google 的数据序列化格式,相比 JSON/XML 更小、更快、更简单。 ### 2.1 基本语法

syntax = "proto3";

package user;

// 定义消息结构

message User {

int32 id = 1;

string name = 2;

string email = 3;

repeated string roles = 4; // 列表类型

}

// 定义服务

service UserService {

rpc GetUser(GetUserRequest) returns (User);

rpc CreateUser(User) returns (User);

}

message GetUserRequest {

int32 user_id = 1;

}

### 2.2 数据类型映射

ProtoBuf 支持丰富的数据类型:

| Proto 类型 | Python 类型 | 说明 |

|-----------|------------|------|

| int32/int64 | int | 整型 |

| float/double | float | 浮点型 |

| bool | bool | 布尔型 |

| string | str | 字符串 |

| bytes | bytes | 字节序列 |

| repeated | list | 列表 |

### 2.3 序列化优势

ProtoBuf 的序列化优势显著:

- **体积小**:二进制格式,比 JSON 小 3-10 倍

- **速度快**:序列化/反序列化速度比 JSON 快 5-100 倍

- **向后兼容**:支持字段编号,方便协议演进

## 三、四种通信模式

gRPC 支持四种通信模式,适应不同的业务场景。

### 3.1 一元 RPC(Unary RPC)

最简单的模式:客户端发送一个请求,服务端返回一个响应。

service OrderService {

rpc GetOrder(OrderRequest) returns (Order);

}

# 服务端实现

class OrderServicer(order_pb2_grpc.OrderServiceServicer):

def GetOrder(self, request, context):

order = get_order_from_db(request.order_id)

return order_pb2.Order(

id=order.id,

product_name=order.product_name,

price=order.price

)

# 客户端调用

def get_order(stub, order_id):

request = order_pb2.OrderRequest(order_id=order_id)

response = stub.GetOrder(request)

return response

适用场景:简单的查询、创建操作,如获取用户信息、创建订单。

### 3.2 服务端流式 RPC

客户端发送一个请求,服务端返回一个流,逐步发送多个响应。

service LogService {

rpc StreamLogs(LogRequest) returns (stream LogEntry);

}

# 服务端实现

class LogServicer(log_pb2_grpc.LogServiceServicer):

def StreamLogs(self, request, context):

for log in read_logs(request.service_name):

yield log_pb2.LogEntry(

timestamp=log.timestamp,

level=log.level,

message=log.message

)

# 客户端调用

def stream_logs(stub, service_name):

request = log_pb2.LogRequest(service_name=service_name)

for log_entry in stub.StreamLogs(request):

print(f"[{log_entry.level}] {log_entry.message}")

适用场景:大数据传输、实时推送,如日志流、股票行情。

### 3.3 客户端流式 RPC

客户端发送一个流,服务端接收完所有请求后返回一个响应。

service UploadService {

rpc UploadFile(stream FileChunk) returns (UploadResponse);

}

# 服务端实现

class UploadServicer(upload_pb2_grpc.UploadServiceServicer):

def UploadFile(self, request_iterator, context):

file_data = b''

filename = None

for chunk in request_iterator:

file_data += chunk.data

filename = chunk.filename

save_file(filename, file_data)

return upload_pb2.UploadResponse(

success=True,

message=f"File {filename} uploaded"

)

# 客户端调用

def upload_file(stub, filepath):

def generate_chunks():

with open(filepath, 'rb') as f:

while True:

chunk = f.read(1024 * 64) # 64KB chunks

if not chunk:

break

yield upload_pb2.FileChunk(

filename=os.path.basename(filepath),

data=chunk

)

response = stub.UploadFile(generate_chunks())

return response

适用场景:文件上传、批量数据提交、聚合计算。

### 3.4 双向流式 RPC

客户端和服务端同时使用流,实现全双工通信。

service ChatService {

rpc Chat(stream ChatMessage) returns (stream ChatMessage);

}

# 服务端实现

class ChatServicer(chat_pb2_grpc.ChatServiceServicer):

def Chat(self, request_iterator, context):

# 接收客户端消息并回复

def send_responses():

for message in request_iterator:

# 处理消息

response = process_message(message)

yield chat_pb2.ChatMessage(

user="Server",

content=response,

timestamp=int(time.time())

)

return send_responses()

# 客户端调用(双向流需要使用线程或异步)

def chat(stub):

def generate_messages():

while True:

msg = input("You: ")

if msg == "quit":

break

yield chat_pb2.ChatMessage(

user="Client",

content=msg,

timestamp=int(time.time())

)

responses = stub.Chat(generate_messages())

for response in responses:

print(f"{response.user}: {response.content}")

适用场景:实时聊天、游戏对战、协同编辑。

## 四、服务治理

在微服务架构中,服务治理是保证系统稳定性的关键。

### 4.1 服务注册与发现

gRPC 通常配合服务注册中心使用:

# 使用 Consul 进行服务注册

import consul

def register_service(consul_client, service_name, service_id, address, port):

consul_client.agent.service.register(

name=service_name,

service_id=service_id,

address=address,

port=port,

check=consul.Check.grpc(address, port, "10s")

)

# 服务发现

def discover_service(consul_client, service_name):

services = consul_client.health.service(service_name, passing=True)

if services:

return random.choice(services[1])

return None

### 4.2 健康检查

gRPC 支持标准的健康检查协议:

// 标准 gRPC 健康检查

service Health {

rpc Check(HealthCheckRequest) returns (HealthCheckResponse);

}

from grpc_health.v1 import health_pb2, health_pb2_grpc

class HealthServicer(health_pb2_grpc.HealthServicer):

def Check(self, request, context):

# 检查服务健康状态

if is_healthy():

return health_pb2.HealthCheckResponse(

status=health_pb2.HealthCheckResponse.SERVING

)

return health_pb2.HealthCheckResponse(

status=health_pb2.HealthCheckResponse.NOT_SERVING

)

### 4.3 超时与重试

合理的超时和重试策略可以防止级联故障:

# 客户端设置超时

def call_with_timeout(stub, timeout_seconds=5):

try:

response = stub.GetUser(

user_pb2.GetUserRequest(user_id=1),

timeout=timeout_seconds

)

return response

except grpc.RpcError as e:

if e.code() == grpc.StatusCode.DEADLINE_EXCEEDED:

print("Request timed out")

return None

# 重试策略

def call_with_retry(stub, max_retries=3):

for attempt in range(max_retries):

try:

return stub.GetUser(user_pb2.GetUserRequest(user_id=1))

except grpc.RpcError as e:

if attempt == max_retries - 1:

raise

time.sleep(2 ** attempt) # 指数退避

## 五、负载均衡

gRPC 支持多种负载均衡策略。

### 5.1 客户端负载均衡

from grpc import aio

from grpc_channelz.v1 import channelz

# 使用 round_robin 策略

def create_channel_with_lb(addresses):

# 创建带负载均衡的 channel

channel = aio.insecure_channel(

'dns:///my-service',

options=[

('grpc.lb_policy_name', 'round_robin'),

('grpc.enable_retries', 1),

]

)

return channel

# 自定义负载均衡

class WeightedRoundRobinBalancer:

def __init__(self, servers, weights):

self.servers = servers

self.weights = weights

self.current_weights = [0] * len(servers)

def get_next_server(self):

# 加权轮询算法

total = sum(self.weights)

for i in range(len(self.weights)):

self.current_weights[i] += self.weights[i]

selected = self.current_weights.index(max(self.current_weights))

self.current_weights[selected] -= total

return self.servers[selected]

### 5.2 服务端负载均衡

使用 Nginx 或 Envoy 作为代理:

# Nginx gRPC 配置示例

upstream grpc_servers {

server 192.168.1.10:50051;

server 192.168.1.11:50051;

server 192.168.1.12:50051;

}

server {

listen 50051 http2;

location / {

grpc_pass grpc_servers;

grpc_connect_timeout 5s;

grpc_read_timeout 30s;

}

}

### 5.3 常用负载均衡策略

| 策略 | 特点 | 适用场景 |

|-----|------|---------|

| Round Robin | 轮询分发 | 服务器性能相近 |

| Weighted Round Robin | 加权轮询 | 服务器性能差异大 |

| Least Connection | 最少连接 | 长连接场景 |

| Consistent Hash | 一致性哈希 | 有状态服务 |

## 六、gRPC 与 REST 对比

### 6.1 技术层面对比

| 维度 | gRPC | REST |

|-----|------|------|

| 协议 | HTTP/2 | HTTP/1.1 |

| 数据格式 | ProtoBuf(二进制) | JSON(文本) |

| 性能 | 高(二进制+多路复用) | 中等 |

| 流式支持 | 原生支持 | 需额外实现 |

| 浏览器支持 | 需要 gRPC-Web | 原生支持 |

| 调试 | 需要工具 | curl 即可 |

### 6.2 性能对比

# 性能测试示例

import time

import requests

import grpc

def benchmark_rest_vs_grpc():

# REST 测试

start = time.time()

for _ in range(1000):

requests.get("http://localhost:8000/api/users/1")

rest_time = time.time() - start

# gRPC 测试

channel = grpc.insecure_channel('localhost:50051')

stub = user_pb2_grpc.UserServiceStub(channel)

start = time.time()

for _ in range(1000):

stub.GetUser(user_pb2.GetUserRequest(user_id=1))

grpc_time = time.time() - start

print(f"REST: {rest_time:.2f}s")

print(f"gRPC: {grpc_time:.2f}s")

print(f"gRPC 快 {rest_time/grpc_time:.2f} 倍")

典型测试结果:gRPC 比 REST 快 3-7 倍,数据传输量减少 60-80%。

### 6.3 选择建议

**使用 gRPC 的场景:**

- 微服务内部通信

- 对性能要求高

- 需要流式传输

- 多语言技术栈

**使用 REST 的场景:**

- 公开 API

- 浏览器直连

- 需要简单调试

- 对性能要求不高

## 七、实战案例:电商订单系统

下面以电商订单系统为例,演示 gRPC 的完整应用。

### 7.1 定义 Proto 文件

syntax = "proto3";

package ecommerce;

// 订单服务

service OrderService {

// 创建订单(一元)

rpc CreateOrder(CreateOrderRequest) returns (Order);

// 获取订单流(服务端流)

rpc StreamOrders(StreamOrdersRequest) returns (stream Order);

// 批量创建订单(客户端流)

rpc BatchCreateOrders(stream CreateOrderRequest) returns (BatchCreateResponse);

// 订单状态订阅(双向流)

rpc SubscribeOrderStatus(stream SubscribeRequest) returns (stream OrderStatus);

}

message CreateOrderRequest {

int32 user_id = 1;

repeated OrderItem items = 2;

string shipping_address = 3;

}

message OrderItem {

int32 product_id = 1;

int32 quantity = 2;

float price = 3;

}

message Order {

int32 id = 1;

int32 user_id = 2;

repeated OrderItem items = 3;

float total_amount = 4;

string status = 5;

int64 created_at = 6;

}

message StreamOrdersRequest {

int32 user_id = 1;

int32 limit = 2;

}

message BatchCreateResponse {

int32 success_count = 1;

int32 failed_count = 2;

repeated int32 order_ids = 3;

}

message SubscribeRequest {

int32 order_id = 1;

}

message OrderStatus {

int32 order_id = 1;

string status = 2;

int64 timestamp = 3;

string message = 4;

}

### 7.2 服务端实现

import grpc

from concurrent import futures

import time

import ecommerce_pb2

import ecommerce_pb2_grpc

class OrderServiceServicer(ecommerce_pb2_grpc.OrderServiceServicer):

def __init__(self):

self.orders = {}

self.order_counter = 0

def CreateOrder(self, request, context):

# 创建订单

self.order_counter += 1

order_id = self.order_counter

total = sum(item.price * item.quantity for item in request.items)

order = ecommerce_pb2.Order(

id=order_id,

user_id=request.user_id,

items=request.items,

total_amount=total,

status="CREATED",

created_at=int(time.time())

)

self.orders[order_id] = order

# 发布订单创建事件

publish_event("order.created", order)

return order

def StreamOrders(self, request, context):

# 流式返回用户订单

count = 0

for order_id, order in self.orders.items():

if order.user_id == request.user_id:

if count >= request.limit:

break

yield order

count += 1

time.sleep(0.1) # 模拟延迟

def BatchCreateOrders(self, request_iterator, context):

# 批量创建订单

success_count = 0

failed_count = 0

order_ids = []

for request in request_iterator:

try:

order = self.CreateOrder(request, context)

order_ids.append(order.id)

success_count += 1

except Exception as e:

failed_count += 1

print(f"Failed to create order: {e}")

return ecommerce_pb2.BatchCreateResponse(

success_count=success_count,

failed_count=failed_count,

order_ids=order_ids

)

def SubscribeOrderStatus(self, request_iterator, context):

# 双向流:订单状态订阅

subscribed_orders = set()

def receive_requests():

for request in request_iterator:

subscribed_orders.add(request.order_id)

# 启动接收线程

import threading

thread = threading.Thread(target=receive_requests)

thread.start()

# 发送状态更新

while context.is_active():

for order_id in subscribed_orders:

if order_id in self.orders:

order = self.orders[order_id]

yield ecommerce_pb2.OrderStatus(

order_id=order_id,

status=order.status,

timestamp=int(time.time()),

message=f"Order {order_id} status: {order.status}"

)

time.sleep(5)

def serve():

server = grpc.server(futures.ThreadPoolExecutor(max_workers=10))

ecommerce_pb2_grpc.add_OrderServiceServicer_to_server(

OrderServiceServicer(), server

)

server.add_insecure_port('[::]:50051')

print("Order Service started on port 50051")

server.start()

server.wait_for_termination()

if __name__ == '__main__':

serve()

### 7.3 客户端实现

import grpc

import ecommerce_pb2

import ecommerce_pb2_grpc

class OrderClient:

def __init__(self, host='localhost', port=50051):

self.channel = grpc.insecure_channel(f'{host}:{port}')

self.stub = ecommerce_pb2_grpc.OrderServiceStub(self.channel)

def create_order(self, user_id, items, address):

# 创建订单项

order_items = [

ecommerce_pb2.OrderItem(

product_id=item['product_id'],

quantity=item['quantity'],

price=item['price']

) for item in items

]

request = ecommerce_pb2.CreateOrderRequest(

user_id=user_id,

items=order_items,

shipping_address=address

)

response = self.stub.CreateOrder(request)

print(f"Order created: {response.id}, Total: {response.total_amount}")

return response

def stream_orders(self, user_id, limit=10):

request = ecommerce_pb2.StreamOrdersRequest(

user_id=user_id,

limit=limit

)

print(f"Streaming orders for user {user_id}:")

for order in self.stub.StreamOrders(request):

print(f" Order {order.id}: {order.status}, ${order.total_amount}")

def batch_create_orders(self, orders_data):

def generate_requests():

for data in orders_data:

order_items = [

ecommerce_pb2.OrderItem(

product_id=item['product_id'],

quantity=item['quantity'],

price=item['price']

) for item in data['items']

]

yield ecommerce_pb2.CreateOrderRequest(

user_id=data['user_id'],

items=order_items,

shipping_address=data['address']

)

response = self.stub.BatchCreateOrders(generate_requests())

print(f"Batch create: {response.success_count} success, {response.failed_count} failed")

return response

# 使用示例

if __name__ == '__main__':

client = OrderClient()

# 创建单个订单

order = client.create_order(

user_id=1,

items=[

{'product_id': 101, 'quantity': 2, 'price': 99.9},

{'product_id': 102, 'quantity': 1, 'price': 199.0}

],

address="北京市朝阳区xxx"

)

# 流式获取订单

client.stream_orders(user_id=1)

# 批量创建订单

batch_data = [

{

'user_id': 2,

'items': [{'product_id': 103, 'quantity': 1, 'price': 49.9}],

'address': "上海市浦东新区xxx"

},

{

'user_id': 3,

'items': [{'product_id': 104, 'quantity': 3, 'price': 29.9}],

'address': "广州市天河区xxx"

}

]

client.batch_create_orders(batch_data)

### 7.4 项目结构

```

ecommerce-grpc/

├── protos/

│ └── ecommerce.proto

├── generated/

│ ├── ecommerce_pb2.py

│ └── ecommerce_pb2_grpc.py

├── server/

│ └── order_service.py

├── client/

│ └── order_client.py

├── requirements.txt

└── README.md

```

## 八、总结

gRPC 作为现代微服务通信的核心技术,具有以下关键优势:

1. **高性能通信**:基于 HTTP/2 和 ProtoBuf,传输效率远超传统 REST API

2. **灵活的通信模式**:四种通信模式覆盖绝大多数业务场景

3. **强类型约束**:Proto 文件即文档,编译时检查,减少运行时错误

4. **完善的生态**:服务治理、负载均衡、健康检查一应俱全

在实际应用中,建议:

- 内部微服务优先使用 gRPC

- 公开 API 保持 REST 兼容

- 合理设置超时和重试策略

- 结合服务网格(如 Istio)实现更完善的服务治理

gRPC 的学习曲线虽然比 REST 稍陡,但掌握后将极大提升微服务架构的通信效率和开发体验。希望本文能为你的 gRPC 实战之路提供清晰的指引。

本文链接:https://www.kkkliao.cn/?id=969 转载需授权!

版权声明:本文由廖万里的博客发布,如需转载请注明出处。

.png) 手机流量卡

手机流量卡 免费领卡

免费领卡 号卡合伙人

号卡合伙人 产品服务

产品服务 关于本站

关于本站